Članki » Intervjuji » Interview with Peter Van Eeckhoutte

Interview with Peter Van Eeckhoutte

Introduction:We continue our series of interviews with a slightly »unusual« talk this time: Peter Van Eeckhoutte may be unknown to readers who don't follow the InfoSec scene on a daily basis. But he is well known to the international security community and his name is climbing fast on the list of top security researchers. He's the author of many tutorials related to buffer overflow exploitation on Windows platforms and also the driving force behind Corelan Team, a group of security enthusiasts with a common goal: researching security vulnerabilities.

Slo-Tech: Please introduce yourself to our readers. The usual stuff: education, current job, programming skills, etc.

Peter Van Eeckhoutte: Hello folks, my name is Peter Van Eeckhoutte (a typical Belgian name, so don't even try to pronounce my last name :-)), aka. »corelanc0d3r« I finished my Bachelor degree in applied Information Technology in 1998. Development environments/languages such as Clipper, C++, Cobol and RPG were still hot back then (and I believe if you are still fluent in Cobol and RPG, you can make a lot of money nowadays). While most part of that education was focused on analysis and development in general, I quickly came to realize that programming all day long is not what I wanted to do. I had already gained quite some experience with computer hardware and networking at that time, so I started my first job as Technical Services Manager in a local computer shop, in 1997, combining a full time job with full time school. In an attempt to make my own life (and the lives of my co-workers) a bit easier, I tried to take advantage of my programming/scripting background and wrote a number of scripts and tools (VB5 and 6, asp, etc), in an attempt to automate administrative and technical processes.

Slo-Tech: When did you became involved in computer security and what was the motive?

Peter Van Eeckhoutte: One of the computers that was brought in by a customer appeared to act somewhat strange. After spending a while on figuring out what was wrong, I discovered an instance of »Back Orifice« on that computer. I had some experience with programming, so I already experienced the excitement of being able to program a computer and make it do what you want it to do. But after seeing how applications and operating systems can be abused, I got thrilled even more. The Back Orifice »incident« took the concept of »being able to make an application or computer do what you want it to do« to another level It really shifted my attention from just programming to security. In terms of motives: I guess learning and wanting to know how things work are just a natural thing to me, so I cannot really call that a motive... It just happened.

Slo-Tech: Who are security researchers/people you really respect and had an enormous impact on your current security related work?

Peter Van Eeckhoutte: There are a lot of security researchers I really respect. Anyone who took/takes the time to do decent research and share their findings with the world deserves a lot of respect. Without that research and without having the results/techniques available on the internet, I wouldn't probably know what I know today. Unlike certain other areas in IT, learning how to »hack«, how to exploit, etc. is very hard if you have to do it on your own. It requires a specific way of thinking, a different mindset, and of course a lot of background knowledge on how things work. So big kudos to the folks that have put a lot of time and efforts in researching.

Slo-Tech: Peter is well known on the security scene for his excellent buffer overflow exploitation tutorials. Especially because of his unique approach in presenting complex topics in clear and easy-to-follow way. What was the main influence that pushed you into that writing-style direction?

Peter Van Eeckhoutte: While I started learning about buffer overflows myself, I found a lot of information on the internet, or in books. So I'm obviously not the first one (and I don't pretend to be either) to write about these subjects. Most of those resources have a problem though. The first part of the tutorial or books are OK. But then a jump is made. It's hard to identify/qualify what that »jump« consist of or what it is for that matter, but it was quite effective because it stopped me (and many other people I know) from working our way through these tutorials. I guess it kinda ties back into the way certain tutorials and books are written, and the fact that some details are left out. OK, one could argue that it's all about »trying harder« and showing some perseverance as well, but let's be honest: when you've just started the learning process, a little help (as opposed to an unnecessary gap to close) is much appreciated. After all, we are all humans and nobody was born with all the knowledge and the talents to learn everything by yourself.

Anyways, to cut a long story short: this triggered me to start writing my own set of tutorials and basically tell my own story. My suggestion is: read the tutorials, and then read the books. It might help you understand the books because they are, in fact, great resources. And if you feel that some of the tutorials lack some steps or details, let me know. I'm open to suggestions and feedback.

Slo-Tech: Don't you think that classic buffer overflows on the stack are becoming very hard to reliably exploit on modern OSes? Are overflows the next bug-class that are going to be »extinct« or is there still plenty of possibilities to exploit them?

Peter Van Eeckhoutte: First of all, I don't believe in a user-friendly/usable yet 100% fully secured system. If you can install and execute code, you'll most likely going to be able to find, abuse and exploit flaws as well. Look at the amount of efforts that have been put into securing operating systems, compilers, and the stack in the last 10 years, and look at the facts today.

People still find and publish exploit code for stack based overflows every day, despite all the hard work/protection mechanisms. So the good old stack overflows are not dead at all. Stack cookies can be bypassed, ASLR can be bypassed, NX can be bypassed, a lot of applications don't even use /GS, or use 3rd party shared libraries that are not /SafeSEH protected, etc. Despite all that hard work, I don't think these type of bugs are going to dry up any time soon. And even if they do, the next-in-line-family-of-bugs will just present themselves and continue to achieve the same result: system compromise. Or old bugs, perceived as just »DoS« vulnerabilities, might al of a sudden become exploitable because of some clever new techniques. Think about unicode bugs or null pointer dereferences. For a long time, people assumed that these kind of bugs are not exploitable. Hell, a lot of people still think they are not exploitable. I even had a talk about this with FX (from Phenoelit) - up to today, a lot of people still think that unicode overflows are not exploitable... That's sad. Anyways, it only proves that trying to find new techniques for old bugs pays off.

Also: security research is not just for fun. It's a business, for both »white« and »black« hats. As long as people can make money out of it, they'll continue researching it.

Slo-Tech: You are also the leader of Corelan Security Team, a group of security enthusiasts that deals with security research mostly for fun and the learning experience. Can you describe how communication between team members works? Is CT also collaborating with other groups or maybe even with renowned security researchers?

Peter Van Eeckhoutte: Ah well – »leader« is a big formal word... I just founded the blog and started the team, but I don't consider myself to be on another level than anyone else in the team. I respect each and every member for their input and talents. We are peers and we just share common interests: learn and share. Of course, because of the fact that everybody has their daytime jobs, and because of time zone differences, it's really hard to get all members together to have a chat. But despite those hurdles, we have been quite successful in cooperating, using common communication techniques: email (internal mailing list), IRC (private server of course), etc.

We are currently not cooperating with other groups or researches ... not because we don't want to, but our values are very important and we obviously don't control what the other people will do. Also, when a vulnerability is discovered, it is normal that people find some kind of satisfaction and pride in finding new things and sharing them amongst a small group of people before going public with the details. Obviously, that does not mean that we don't respect other groups or researchers. Not at all.

Slo-Tech: What tools are you using for your research? Did CT members wrote any of these tools? Also, does CT use any private, internal, as-of-yet unreleased tools in their research?

Peter Van Eeckhoutte: We mainly use what anyone else uses, but sometimes a good custom script will do things faster :-) Let's say if we stumble upon a »structural bug« in a certain file format. What usually happens next is: we build a little framework/script that will help us find similar bugs in other applications. There's nothing really secret about it. After all, research and vulnerability development is not about tools. Tools will help you do things faster, but you really have to be creative and use your brains first.

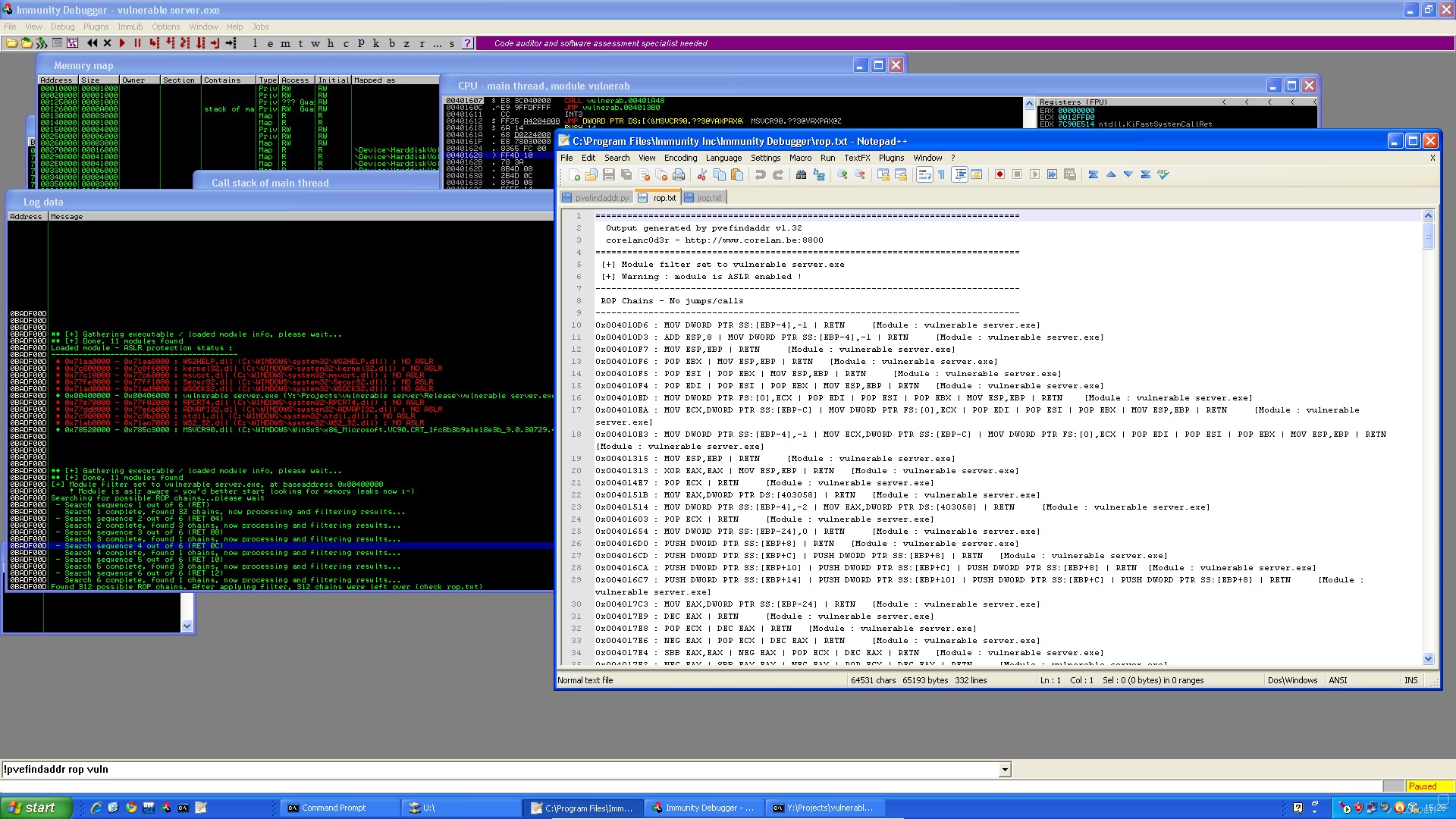

Another example of a script/tool that will help people to analyze crashes and build exploits faster and more reliable is »pvefindaddr«, a plugin (PyCommand) I wrote for Immunity Debugger. This script can be downloaded from the Corelan website.

While this tool is public, we obviously test new versions, new features of this script in our team first. We are currently testing a feature that should help building ROP payloads, so stay tuned!

Other than that, we don't use anything special or secret. Sorry to disappoint you :-) The best and most valuable tool out there, is just common sense. Tools will help you achieve a goal, but the brain needs to set the goal and the path first.

Slo-Tech: What is the standard CT procedure in reporting bugs?

Peter Van Eeckhoutte: I think we use a procedure/process that is frequently used by a lot of people: first, we contact the vendor and try to convince him (yeah, I know, sounds bad doesn't it) that we want to help them fix the bug. That first email does not contain technical details. It only contains a link to our disclosure policy and indicates the type of bug we found. We basically give them 2 weeks to reply and indicate that they want to work with us. (That does not mean that they need to fix the issue in 2 weeks of course). If they reply, they are given the chance to fix the bug, release a patch, and then we go public. The only thing they need to do is keep us posted once in a while, while they are fixing the bug. If they don't reply we send them a reminder. If they ignore the reminder as well, we go public. Simple, but fair.

Slo-Tech: Did CT ever had any problems with companies making legal threats? If so, how do you deal with situations like this? Do you have any legal advisor?

Peter Van Eeckhoutte: Haha - very good question. In fact, just a few weeks ago we had an issue after having approached a company in Denmark. We told them that there was a bug in their application and they replied back that:

- first of all, they don't agree with our policy and

- second, they will take legal actions against us.

I guess many people may have encountered a situation like this before, but it was the first time for me... and I was shocked. Think about it. We are doing you a favor, Mr. developer, and you want to sue me for that ?

So I did some basic research (spoke with a lawyer, with someone from the Computer Crime Unit in Belgium, and with people from EFF), and concluded that there is nothing wrong about what we are doing:

- We did not obtain the software in an illegal way. The trial version can be downloaded for free from the vendor website.

- We did not compromise any 3rd party systems in order to find or exploit the bug.

- My fundamental right on »Freedom of speech« allows me to put research I've done on my website.

Of course, all of that is based on assumptions. I simply don't have the budget to hire lawyers, but I'm pretty confident that the way we approach things is not illegal in any way.

Anyways, I went back to the developer, explained him that I still wanted to help him and asked him why he has been so aggressive. He told me that he does not care about that application any more (but he is still selling it on his website), and wonders why I've put efforts into looking for a bug in that particular application, because »everybody uses the application inside a secured perimeter«... (he was basically saying, that nobody installs the application on a computer directly connected to the internet and he was obviously not considering insider threats). Result: I closed the case, and published the details.

This is a perfect example why people might consider stop doing responsible disclosure. But I have to be honest... I have received many emails from vendors/developers (small companies and big companies), honestly thanking us for caring about doing things in a responsible way. So, to all security researchers in general, if you are serious about doing responsible disclosure... Don't give up. Remember the good ones, and use your anger over the bad ones as motivation to find more bugs, not to give up :-)

To all vendors/developers in general: guys, we are doing you a favor. Don't shoot us, because you are the one who made a mistake in the first place.

Slo-Tech: Can anyone become a member of CT or is there a strict selection? How does this selection process work? Are there any sanctions for abusive members?

Peter Van Eeckhoutte: CT is not a secret society or community. But that does not mean that it's open for anyone to join. After all, we are dealing with possible sensitive 0-day information, and we have some important »rules« to follow. Rules and values all members of the team care about. By just allowing anyone in the team, we would jeopardize those values, and that is a risk I'm not willing to take. One of the values we all think are very important, is learning/sharing and helping other people. So if you are just looking for some help, you can simply use our forums to post questions - any questions really (yes, even the newbie questions), and we'll try to help. If you are into l33t 0-day bugs and nifty exploits only then I have to disappoint you (again): we don't think the exploit itself is the only thing that matters. It's all about the bug, not about the exploit. The exploit might help people to convince other people that a bug is exploitable. But, the only thing that really matters is the bug itself.

Back to CT: we have a fairly good amount of members in the team. Finding a good balance between less members (less input) and more members (more risk of unwanted disclosure) is a tough exercise. What we usually do, if we need additional members, is just monitor certain repositories, IRC channels, forum posts etc, and try to figure out if those people would fit the profile, based on their past research and actions.

Regarding abusive members: we really cannot tolerate/take the risk of having an information breach or conflict of interests. The entire team is based on mutual respect and trust, so if the trust relationship is broken, the story ends for that member. On the other hand, I also believe in second chances - so let's say we evaluate incidents on a case-by-case basis.

Slo-Tech: Did Corelan Team ever sell 0-day bugs to resellers? Would you sell a high profile 0-day bug? Also, would you disclose the bug publicly - suppose the vendor had no interest to patch it - even if you knew that this will put many end-users to risk?

Peter Van Eeckhoutte: No, we've never sold any bugs. One of the reasons for not selling bugs is that it would be hard to split the money amongst all members, without triggering discussions, (which may result in people leaving the team). It's not worth it. Respect, trust, friendship and good values are more important and more valuable than bugs. So basically, not going after the money, keeps us on track in terms of motives and values.

I don't have a problem with people who sell bugs, as long as they don't have an issue with me doing responsible disclosure :-) When we contact a vendor about a certain bug, we really try hard – if necessary - to convince the vendor that the bug will put their customers at risk. If that doesn't do the trick, I don't have a problem going public with it. It might conflict with the concept of responsible disclosure, but we actually try hard to convince people and if they don't care, I guess customers should know about this as well and consider using different software. After all, the bug may already been known in the »underground« scene - if we don't disclose it, someone else might.

Slo-Tech: Do you believe in Responsible disclosure? Do you think it works?

Peter Van Eeckhoutte: I strongly believe in it, but that's just my personal opinion. As I stated earlier, I think we should keep our focus on bugs, not on exploits. We are here to help and to make things safer, not to prove that we can write a fancy exploit and show off. The reality of disclosure is that, whatever type of disclosure you prefer, it will always happen after the facts, after a bug was introduced in code, and discovered by you - the researcher. So if you have the option to at least give a vendor the chance to fix it before you make the details public, then I guess that makes sense. Stating that they wouldn't care or would not fix it in a short amount of time anyway is not fair I think. You don't really know unless you try, unless you give them a chance to reply and work on getting the issue fixed. Of course, nobody can predict the vendor reaction in advance, or if they would fix the bug faster or not when you choose to disclose the bug to the public without telling them)

But it does not really matter, because I also realize that, in 99,99% of the cases, if you get lucky, the developers will fix that particular bug. Sadly, nobody will do a full source code audit because of the fact that you disclose a bug. But they are not going to do a full source code audit for sure if nobody reports them anything. And on top of that, the bug might not get fixed either. After all, not all vendors monitor security mailing lists... So, at least, by addressing them directly, we give them the chance to fix the bug before it's being exploited (if not already). Just like many other people, I am running al kinds of large and small apps myself on a daily basis (well known and less known apps) and I would very much like to get a decent patch before the bug is being exploited. So that's why IMHO, I think responsible disclosure is a fair deal.

Sadly, regardless of what people do in terms of disclosure, we are still miles away from solving the root cause of many bugs in most cases. Still a good amount of bugs are present in applications, not because the developer intentionally introduced the bug, but because he may not know how to write secure code. That is a fact, and until secure coding becomes part of every single development training (at school, etc), it is not going to change any time soon.

OWASP, for example, is really trying to build partnerships with schools and institutions alike... That really is a good initiative and deserves a lot of respect and support. But we are still not nearly where we need to be. So perhaps, security researchers should establish a partnership with companies that can offer secure code training, and should mention this in the advisories that are sent to vendors. So instead of just fixing the bug (of course, based on the type of the bug), we could tell them to fix the bug and get their developers trained, and offer them some options. In fact, this sounds like a plan - if any company is willing to establish a partnership with Corelan Team, contact me - I'm sure we can work something out so we can earn a few bucks to get our hosting and internet connectivity bills paid :-)

Slo-Tech: Corelan Team recently disclosed a series of advisories related to bug in processing .zip file format. Can you please elaborate on how the bug was found and exploited?

Peter Van Eeckhoutte: I guess we just stumbled upon it. While doing some research on file formats in general, one of our members (mr_me) documented the zip file format and realized that one of the header fields refers to the size of the filename inside the zip file. So he just increased that specific header field (size) and made it larger than what would be accepted by the OS, fixed some offsets in the format and basically put an overly long filename inside a zip file. It was amazing to see how many zip apps just crashed over this. Most big, »A class« level applications are OK, but a scary amount of apps are not.

Slo-Tech: Do you think it's easier to find bugs with static analysis (source code review) or dynamic analysis and fuzzing? Which approach is Corelan Team using?

Peter Van Eeckhoutte: I don't think either one of the techniques is easier. If a tool makes it easier to deploy a certain approach, then more people will start using that tool, and the number of bugs that can be found will decrease (because vendors will start using the same tools as well to find and fix bugs). Certain tools can make the fuzzing process easier, but your success ratio might go down after some time.

Source code is not always available, especially not for most commercial applications. Even if source code is available, code audits can be extremely challenging. It's easy to miss a certain vulnerability: a 200 line application can contain a vulnerability and you may have to work on it for a day to find it. At the end, if you think the source code is fine, a compiler/linker might introduce a bug and it would be »game over« as well. The good news is: if you have the source code and if there is a bug, you will most likely find it if you spend enough time on it. Success guaranteed.

Reverse engineering (either application RE or patch RE) is another approach, but – pretty much similar to source code auditing – you really need to master the technique and language before you can spot bugs. Very tough, but if you master it, success is guaranteed.

I know there is a lot of discussion about fuzzing – some people say that dumb fuzzing is not effective anymore (but at the same time, big bugs in big applications are still discovered using just a few lines of code). Others say that you really need to document the file format or network protocol and play with the fields/bits/values and see how the application responds to that (smart fuzzing). This approach works as well. Of course, you'll have to figure out how formats/protocols work, and you may have to build your own fuzzer, or use an often complex framework to get the fuzzing done. This approach will often provide better results than dumb fuzzing. Many application will already handle random data input well, but that does not mean that they will deal with specially crafted input in certain fields. You'll most likely have more success with this approach than with dumb fuzzing.

Newer techniques include a combination of static analysis, pinpointing the interesting functions, and perform in-memory-fuzzing using data tainting. Sounds complex, and it is complex, because there are many obstacles in the way. Anyways, I'll let the fuzzing experts figure out how to deal with this, because it's not clear if this theory will provide actual results.

It is clear that all of those techniques require specific knowledge and lots of time and dedication. Hey, »hacking« sounds like fun, but it is a tough and time-consuming job kids !

CT is using a combination of dumb and smart fuzzing, and some source code auditing. We don't really have enough RE expertise at this point in time, so those 3 techniques are the only/easiest ways to hunt for bugs. Many tools are available to do this, and it's relatively easy to build your own tools if necessary.

Slo-Tech: You attended the BlackHatEU conference in Barcelona in April. Can you describe to our readers how this conference looks like? Any new exploit techniques disclosed?

Peter Van Eeckhoutte: The BlackHat conferences really offer a good combination of high-level and detailed/technical briefings. This year's conference had 3 tracks (as opposed to 2 in previous

editions), which made the choice harder, but it allowed presenters to go quite deep at the same time. What I like about the conference is the atmosphere and environment. Apart from the fact that you get to meet some people you have been communicating with over the year, I was pleasantly surprised (again) that ego's (if any) were left at home, and everybody was kind, friendly, open for a chat.. That was pretty cool.

In terms of (new) exploitation techniques, there were some really interesting talks:

- Clickjacking

- Oracle padding attacks

- Universal XSS

- Oracle MITM

I also really liked the talk about Adobe Heap Management, but to me - the one that stood out above the others - was the one that FX did (about the defense part of Adobe Flash file format vulnerabilities). We should see more people spending time offering good / structural solutions for certain vulnerabilities.

As explained earlier, there still is a huge amount of work to do in order to get bugs fixed. So instead of just hammering on the vendors, we should continue to hammer, but start thinking about generic protection mechanisms at the same time. I know it's the world upside down, but it might be the only solution in the short run.

Slo-Tech: It would be really sad if such good tutorials like yours were lost. Do you have any plans to release them in paper format? Can we expect any new tutorials soon?

Peter Van Eeckhoutte: One of the plans I have for 2010-2011 is reviewing the tutorials, adding some newer techniques, and see if I can publish them in paper-format. But before I can do that, I need to complete my work on Win32 exploitation, which includes heap overflows and ROP (Return-oriented Programming). Lots of work to do - and yet so little time :-) Stay tuned !

Slo-Tech: What would you suggest to kids that are starting with security researching?

Peter Van Eeckhoutte:

- Start by learning how things work before starting to learn how to break things.

- Be prepared to invest a LOT of time, concentrate and don't give up.

- Start from scratch, from the basics, don't try to dive if you don't know how to swim first.

- Ask the right questions at the right time.

I know we have been seeing some really cool techniques lately (ROP, heap spraying, etc), but if you don't know anything about exploitation, don't try to do this yourself. Just accept that you need to learn the basics first and you'll get there eventually. In other words, if you don't know how the basics of buffer overflows work, don't try to do heap spraying.

Slo-Tech: The usual question for the end: does CT have any 0-days in their repository? Can you share some details? :-)

Peter Van Eeckhoutte: Yeah, we still have some 0 days, but If I tell you now, it wouldn't be a 0-day anymore :-)

Thanks to Peter Van Eeckhoutte for the interview.

Brad Spengler (PaX Team/grsecurity) interview

Slo-Tech: Introduce yourself to our readers (job, education, interests, etc) and please explain if your real surname is Spender or Spengler :-) Also, was Brad ever member of any Black hat group? Brad Spengler: Brad Spengler (not Brad Spender), though the similarity in the names isn't a coincidence, ...

Interview with Dustin Kirkland, Ubuntu Core Developer about encryption in Ubuntu

Dustin Kirkland is an Ubuntu Core Developer, working for Canonical on the Ubuntu Server. His current focus is developing the Ubuntu Enterprise Cloud for the Ubuntu 10.04 LTS release, but previously he had worked on a number of Ubuntu features and packages, including Ubuntu's Encrypted Home Directories. ...

Interview with HD Moore

HD Moore is probably known to everyone who is following information security field. HD is author of many projects (www.digitaloffense.net) including Metasploit framework, that was recently acquired by Rapid7. He shared his experience with this transition process at this years Black Hat DC conference ...

Interview with Rafal Lukawiecki

Slo-Tech: Can you please introduce yourself? Rafal Lukawiecki: Absolutely. My name is Rafal Lukawiecki and I work for Project Botticelli Ltd., which is a small consulting company based in Ireland. Over there I specialize in [st.link http://en.wikipedia.org/wiki/Business_intelligence business intelligence] ...

Interview: Gary Kovacs

Slo-tech: Can you please introduce yourself? Gary Kovacs: Yes. I’m Gary Kovacs, CEO of Mozilla. [st.slika 48180] Slo-tech: How do you feel after these two months of being CEO of Mozilla? Gary Kovacs: It’s actually been three and a half weeks and I feel great. It is as I expected it ...